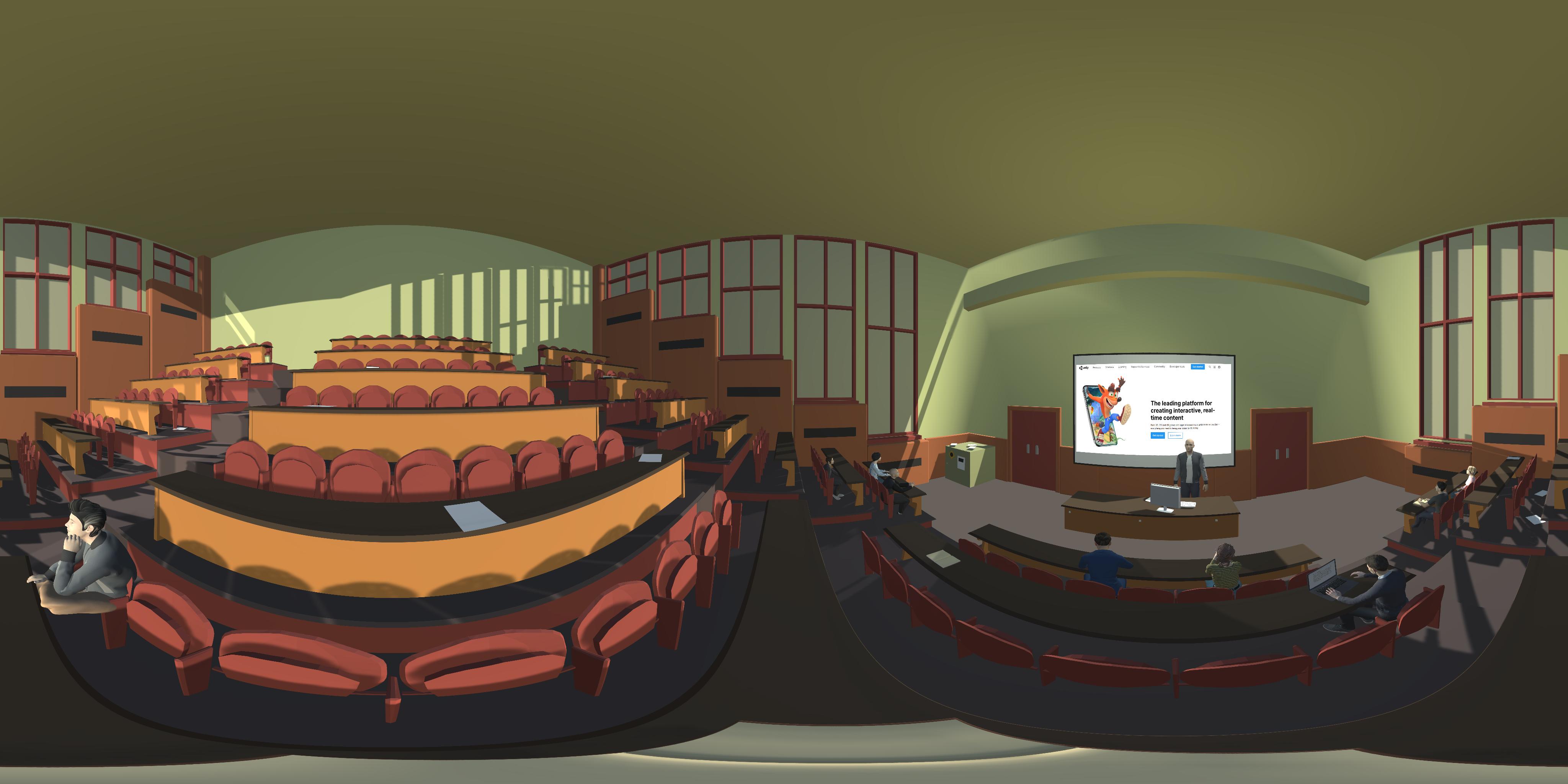

Task 3: SART (Sustained Attention to Response Task), Lecture Hall

Aims:

To understand users' ability to focus on a visual sustained-attention task, together with their response strategy, response inhibition, and motor impulsivity.

Groups of participants:

-Controls

-People with ADHD/ADHD traits

Task Details:

For this task, the scene includes audio of a lecturer explaining a topic and sounds from students such as coughing, sneezing, talking, writing, and paper flipping. At a certain point a paper plane flies and lands on the ground (with a sound). In total, 225 digits are shown: each of the nine digits is presented 25 times (225/9) in random order. Each digit is shown for 1.15 seconds, after a delay of 1.15 seconds.
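The trial structure described above can be sketched in Python as follows. This is only an illustrative sketch, not the project's actual Unity/C# implementation: it assumes the nine digits are 1 to 9 and that the 1.15-second delay and the 1.15-second display do not overlap. The names `build_sequence` and `total_duration_s` are hypothetical.

```python
import random
from collections import Counter

DIGITS = range(1, 10)       # assumption: the nine digits are 1-9
REPEATS_PER_DIGIT = 25      # 225 / 9, as stated in the task details
DELAY_S = 1.15              # delay before each digit
DISPLAY_S = 1.15            # time each digit stays on screen


def build_sequence(seed=None):
    """Return 225 digits, each digit appearing exactly 25 times, in random order."""
    rng = random.Random(seed)
    sequence = [d for d in DIGITS for _ in range(REPEATS_PER_DIGIT)]
    rng.shuffle(sequence)
    return sequence


def total_duration_s(n_trials):
    """Total run time, assuming delay and display are strictly sequential."""
    return n_trials * (DELAY_S + DISPLAY_S)


sequence = build_sequence(seed=42)
counts = Counter(sequence)
print(len(sequence), dict(counts))
print(f"{total_duration_s(len(sequence)):.1f} s")
```

Under these assumptions a full run of 225 trials lasts about 517.5 seconds (roughly 8.6 minutes). Shuffling a pre-built list, rather than drawing each digit independently, is what guarantees the exact 25-per-digit balance.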

Questionnaire:

Q1: Pressing the button for the wrong number affected my next responses.

Q2: The lecturer and other students were distracting me during this task.

Q3: I think I did very well in completing this task.

Q4: I think I didn’t do very well in completing this task.

Q5: The task was interesting and was similar to a scenario that can happen in our day-to-day life.

Q6: I felt present and involved in VR.

Data Collected:

-From the task: a record is saved only when the participant presses the controller button. Each record contains the username, the digit shown, accuracy (whether the response was correct or wrong), reaction time (from digit onset to button press), and a timestamp.

-Eye tracker VR add-on (Pupil Labs)

-EEG VR add-on (Looxid Link)

-From the questionnaire
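The per-press task record listed above could look roughly like the following Python sketch. The field names, the `make_record` and `to_csv` helpers, and the CSV output are all hypothetical stand-ins (the project stores data through its own MySQL/PHP pipeline). The correctness rule is also an assumption: the source does not name a no-go digit, so it is passed in as a parameter, and the value 3 in the example is only the conventional SART choice.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from datetime import datetime, timezone


@dataclass
class PressRecord:
    """One row, saved only when the controller button is pressed."""
    username: str
    digit_shown: int
    correct: bool            # assumed rule: a press is wrong only on the no-go digit
    reaction_time_s: float   # from digit onset to button press
    timestamp: str           # ISO 8601, UTC


def make_record(username, digit_shown, shown_at_s, pressed_at_s, no_go_digit, now=None):
    """Build a record for a single button press (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    return PressRecord(
        username=username,
        digit_shown=digit_shown,
        correct=(digit_shown != no_go_digit),
        reaction_time_s=round(pressed_at_s - shown_at_s, 3),
        timestamp=now.isoformat(),
    )


def to_csv(records):
    """Serialise records to CSV text, one column per saved field."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(PressRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buf.getvalue()


records = [
    make_record("p01", 7, shown_at_s=10.00, pressed_at_s=10.42, no_go_digit=3),
    make_record("p01", 3, shown_at_s=12.30, pressed_at_s=12.71, no_go_digit=3),
]
csv_text = to_csv(records)
print(csv_text)
```

Because rows exist only for button presses, correctly withheld responses leave no row; they would have to be inferred afterwards by comparing the logged presses against the full digit sequence.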

Hardware:

-Alienware m15 Gaming Laptop

-HTC Vive Pro

-Fovitec 2x 7'6" Light Stand VR Compatible Kit

-Pupil Labs

-Looxid Link

Software:

-Unity

-Unity Asset Store

-Daz3D

-Blender

-IBM TTS API

Programming Languages and Tools used:

-C#

-Python using Spyder in Anaconda

-Jupyter Notebook

-MySQL

-PHP API